AffectI: A Game for Diverse, Reliable, and Efficient Affective Image Annotation

Xingkun Zuo, Jiyi Li, Qili Zhou, Jianjun Li, Xiaoyang Mao

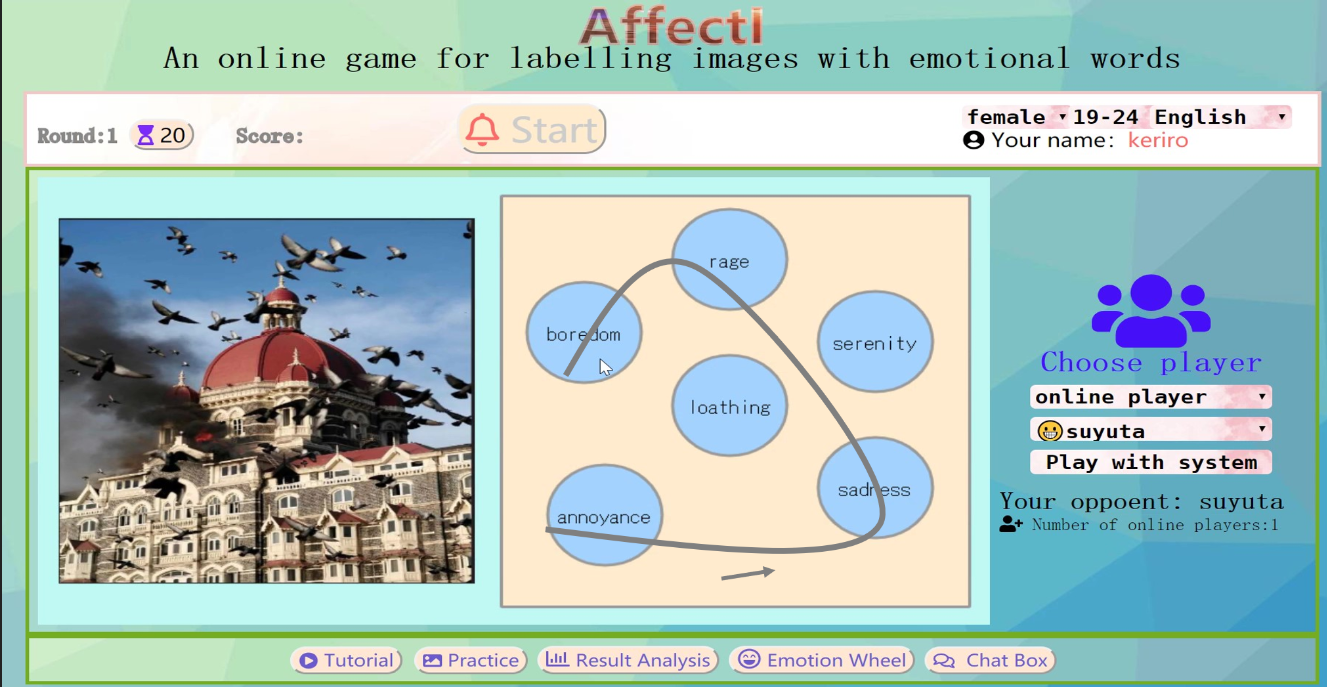

Interface

Abstract

An important application of affective image annotation is affective image content analysis, which aims to automatically understand the emotions that image content evokes in viewers. The so-called subjective perception issue makes it difficult to link image features to the expected perceived emotion: different viewers may respond to the same image with different emotions. Because they can learn features in an end-to-end fashion, recent deep learning technologies have opened a new window on affective image content analysis. This has created a growing demand for affective image annotation technologies that can build large, reliable training datasets. This paper proposes AffectI, a novel affective image annotation technique based on the concept of Game With a Purpose (GWAP). AffectI efficiently collects diverse and reliable emotional labels together with an estimated emotion distribution for each image. It features three novel mechanisms: a selection mechanism that ensures all emotion words are fairly evaluated, so that the collected labels are diverse and reliable; an estimation mechanism that estimates the emotion distribution by aggregating partial pairwise comparisons of the emotion words, so that labels are collected effectively and efficiently; and an incentive mechanism that shows how the current player compares with her opponents and with all past players, which promotes player interest and also contributes to the reliability and diversity of the labels. Our experimental results demonstrate that AffectI collects more diverse and reliable labels than existing methods. A subjective evaluation also confirmed that using GWAP reduces evaluator frustration.
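The selection and estimation mechanisms can be illustrated with a small sketch. It is not AffectI's implementation, and every name in it is hypothetical. It assumes an eight-word emotion vocabulary (`EMOTIONS`, borrowed from Mikels' categories), a `select_pair` rule that always shows the pair of words that has been seen least so far, and a Bradley-Terry model fitted with the standard MM algorithm. The Bradley-Terry model stands in for the paper's aggregation of partial pairwise comparisons. Its normalized strengths are read as the estimated emotion distribution.

```python
from itertools import combinations

# Hypothetical vocabulary (Mikels' eight categories); AffectI's word list may differ.
EMOTIONS = ["amusement", "awe", "contentment", "excitement",
            "anger", "disgust", "fear", "sadness"]


def select_pair(shown_counts):
    """Hypothetical fairness rule: return the pair of word indices with the
    lowest combined exposure count (ties broken by index order)."""
    n = len(shown_counts)
    return min(combinations(range(n), 2),
               key=lambda p: (shown_counts[p[0]] + shown_counts[p[1]], p))


def estimate_distribution(wins, alpha=0.1, n_iter=200):
    """Estimate a distribution over words from partial pairwise comparisons
    using a Bradley-Terry model fitted by the MM algorithm (assumed stand-in).

    wins[i][j] counts how often word i was chosen over word j.
    alpha adds a small virtual count to every ordered pair so that words that
    were never chosen still get a positive score and no denominator is zero.
    """
    n = len(wins)
    w = [[(wins[i][j] + alpha) if i != j else 0.0 for j in range(n)]
         for i in range(n)]
    p = [1.0 / n] * n
    for _ in range(n_iter):
        new_p = []
        for i in range(n):
            total_wins = sum(w[i])
            # MM update: wins of i divided by sum over opponents of n_ij / (p_i + p_j)
            denom = sum((w[i][j] + w[j][i]) / (p[i] + p[j])
                        for j in range(n) if j != i)
            new_p.append(total_wins / denom)
        s = sum(new_p)
        p = [x / s for x in new_p]  # normalize so the scores form a distribution
    return p
```

For example, `select_pair([0] * len(EMOTIONS))` returns `(0, 1)` on the first round. A game loop would increment both words' exposure counts after each comparison, so untested words are preferred next. Only the pairs that players actually compared need entries in `wins`. The rest stay zero and are covered by the `alpha` smoothing.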

Links

Video: [Watch online]

Paper: [PDF]

Presentation: [PDF]

Game: [Play now]

Acknowledgments

This work was partially supported by JSPS KAKENHI Grant Numbers 17H00738, 19H05472, and 19K20277, and by the National Science Fund of China under Grant Number 61871170.

Reference

ACM MULTIMEDIA CONFERENCE 2020